Google introduces an AI game changer with Gemini

Over the past year, OpenAI shook up the world of AI with its groundbreaking ChatGPT. Meanwhile Google, known as one of the pioneers of AI technology, stayed somewhat out of the spotlight. With the unveiling of Gemini, it now looks ready to take a big leap forward!

The game is on!

This week Google introduced Gemini. Google presents Gemini as a ‘multimodal AI model’ that was built from the ground up to process and understand different kinds of input, including text, images, video, audio and code, whereas models such as GPT-4 are primarily language models.

The introduction looks very impressive and promises applications we have not yet seen with ChatGPT or other AI tools. The video gives the impression of being a real-time demo of how Gemini works, but soon after the launch it turned out that this is not quite the case. The video was edited and there were no actual spoken prompts. Google states that “all user prompts and outputs in the video are real, but shortened to keep the video concise. The video illustrates what the multimodal user experiences built with Gemini could look like. We made it to inspire developers”. In other words, among other things the extra prompts that were needed to get this output were left out.

Watch this six-minute introduction video for a first impression.

What can Gemini be used for?

Its multimodality makes Gemini a powerful tool for a wide range of applications, including:

- Natural language processing (NLP): Gemini can understand and process text, and can be used for tasks such as translation, summarization and question answering.

- Computer vision (CV): Gemini can understand and process images and video, and can be used for tasks such as object recognition, face recognition and pattern recognition.

- Audio processing (AP): Gemini can understand and process audio, and can be used for tasks such as speech recognition, music analysis and sound synthesis.

- Code understanding (CU): Gemini can understand and process code, and can be used for tasks such as code analysis, code generation and coding assistance.

How does Gemini perform compared with other AI models such as GPT-4?

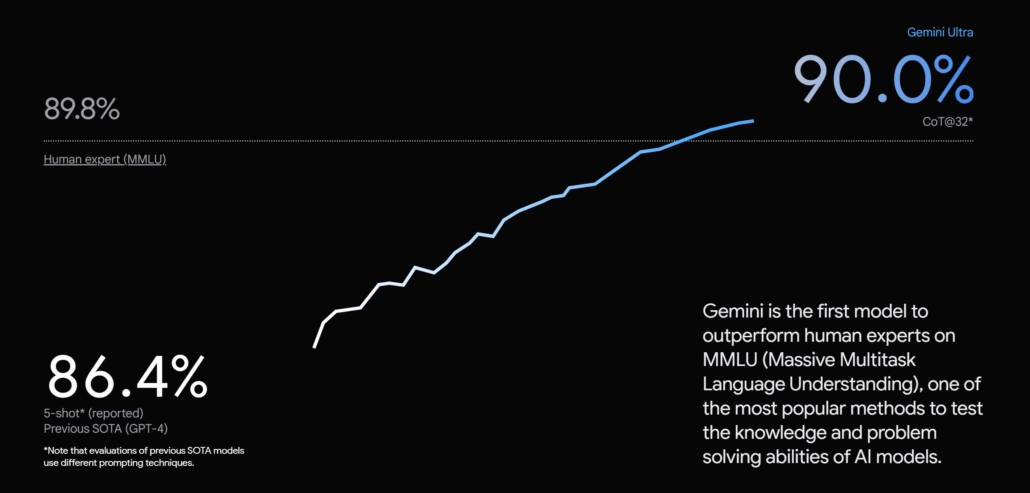

According to Google, Gemini is its largest and most capable AI model to date, and it outperforms other AI models, including GPT-4, on most benchmarks.

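Google reports that Gemini Ultra beats the previous state of the art on 30 of the 32 academic benchmarks it used, covering language understanding as well as image, video and audio tasks. On the benchmarks where it is compared directly with GPT-4, the lead is mostly a matter of a few percentage points.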

With a score of 90.0%, Gemini Ultra is, according to Google, also the first model to outperform human experts on MMLU (massive multitask language understanding), a benchmark that combines 57 subjects such as mathematics, physics, history, law, medicine and ethics to test both world knowledge and problem-solving ability.
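To make a number like 90.0% more concrete: an MMLU-style score is essentially the share of multiple-choice questions answered correctly. The sketch below shows that calculation on a handful of made-up answers. The function name `mmlu_style_score`, the toy data and the choice to average over all questions are assumptions for illustration, not Google's evaluation code.

```python
from collections import defaultdict

def mmlu_style_score(results):
    """Compute overall and per-subject accuracy.

    results: list of (subject, predicted_choice, correct_choice) tuples.
    This layout is hypothetical and only meant for illustration.
    """
    counts = defaultdict(lambda: [0, 0])  # subject -> [correct, total]
    for subject, predicted, correct in results:
        counts[subject][1] += 1
        if predicted == correct:
            counts[subject][0] += 1

    per_subject = {s: c / t for s, (c, t) in counts.items()}
    total_correct = sum(c for c, _ in counts.values())
    total_questions = sum(t for _, t in counts.values())
    return total_correct / total_questions, per_subject

# Made-up answers to five questions across three subjects.
toy_results = [
    ("math", "A", "A"),
    ("math", "B", "C"),   # wrong answer
    ("law", "D", "D"),
    ("law", "B", "B"),
    ("ethics", "C", "C"),
]

overall, per_subject = mmlu_style_score(toy_results)
print(f"overall accuracy: {overall:.1%}")
print(per_subject)
```

A real evaluation runs the same idea over thousands of questions, and details such as how many example questions are shown in the prompt can shift the result by a few points.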

Key differences between Gemini and GPT-4 (according to Google)

- Multimodality: Gemini is a multimodal AI model, which means it can process and understand information from different kinds of input at once. For example, it can translate text while taking into account the image that accompanies it, identify objects in an image while taking into account the text written about them, or write code for a task based on spoken instructions. GPT-4 is primarily a language model: it accepts images alongside text, but does not handle audio or video input in the same way.

- Size and complexity: Google describes Gemini as its largest and most capable AI model. A direct size comparison with GPT-4 is not possible, because neither Google nor OpenAI has published the parameter counts of Gemini Ultra or GPT-4. Figures that circulate online, such as 1.56 trillion parameters for Gemini, are unconfirmed.

- Different versions: Gemini is available in several versions (Ultra, Pro and Nano), each with different capacities and capabilities. GPT-4 is not offered in a comparable range of sizes.

Gemini is first being integrated into the Bard chatbot and the Pixel 8 Pro, followed by other Google products such as Search, Ads, Chrome and Duet AI. The number of applications and the amount of data Google has access to (think also of Gmail, YouTube, Nest cameras and Google Maps) gives it enormous potential and a unique position compared with other players such as OpenAI.

It is first being rolled out in 170 countries outside Europe, so we will have to be patient a little longer before we can use it in the Netherlands.

One caveat is that the comparison with GPT-4 is based on Gemini's Ultra model, and where Ultra scores better, the difference is only a few percentage points. How Google's top AI model performs in practice is still unknown, and it is not expected to be rolled out until early 2024, whereas GPT-4 has been available since March 2023. OpenAI is reportedly already working on GPT-5 behind the scenes, so it will be interesting to see what its applications will be and when it is released.
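The difference between "percentage points" and "percent better" is easy to blur, so here is a small worked sketch. It uses the 90.0% MMLU score mentioned above and, as an assumption that does not come from this article, a reported GPT-4 MMLU score of 86.4%. The function name `compare_scores` is made up for illustration.

```python
def compare_scores(score_a, score_b):
    """Return (gap in percentage points, relative gain in percent) of a over b."""
    point_gap = score_a - score_b
    relative_gain = point_gap / score_b * 100
    return round(point_gap, 1), round(relative_gain, 1)

gemini_ultra_mmlu = 90.0  # score cited in this article
gpt4_mmlu = 86.4          # assumption: reported GPT-4 score, not from this article

gap, rel = compare_scores(gemini_ultra_mmlu, gpt4_mmlu)
print(f"gap: {gap} percentage points, relative gain: {rel}%")
```

Under these assumptions the gap is a few percentage points, which is why headline claims of a model being far better on a benchmark deserve a second look.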

See more about the Gemini launch here.
